AI-Cademy Canada: Five Takeaways on AI in Higher Education

Good morning from Vancouver!

It’s a beautiful day: sunshine, crisp air, and snow-capped mountains in the distance. I mention this not to gloat, but as a reminder that even the most talented forecasters can’t perfectly predict the future. It was supposed to rain for 14 straight days, yet here we are with four sunny days in five. Did you know that a four-day forecast now is as accurate as a one-day forecast was 30 years ago?

That unpredictability is something we also see in higher education, especially when it comes to AI. A couple of weeks ago, I had the pleasure of presenting at and attending the AI-Cademy Canada Summit for Post-Secondary Education, hosted by our friends at the Higher Education Strategy Associates. It was a fantastic event, bringing together exactly the mix of people I always hope to meet.

For someone like me—who wears multiple hats as both an ed-tech co-founder at Plaid Analytics and an adjunct professor at UBC—this mix of perspectives was incredibly valuable.

My colleague Pat Lougheed and I walked away with five key takeaways on how AI is shaping the future of higher education. (And while she wasn't there, our lead writer Melinda Roy is always helping me tell better stories, so thanks, Melinda.)

1️⃣ AI Can Be a Unifying Force in Higher Education

New technologies in the last 15 years have often created silos rather than eliminated them. AI, however, has the potential to break down barriers between departments.

Whether it’s improving decision-making, streamlining administrative processes, or unlocking insights that were previously out of reach, AI offers something for every part of an institution. If implemented thoughtfully, it can serve as a bridge—rather than another isolated system—helping different teams work more effectively together.

2️⃣ AI Literacy is a Real Concern for Senior Leadership

Senior leaders are under increasing pressure to adopt AI within their institutions. The challenge? Many don’t have direct experience with these technologies.

That makes it difficult to model best practices, assess risks, and determine the right level of professional development for their teams. Despite this, there’s a growing curiosity among senior leaders about how AI can enhance strategic enrolment management, academic planning, and student success initiatives.

3️⃣ Building AI Capacity is No Small Task

AI requires new skills, tools, and processes—and building that capacity is no trivial effort.

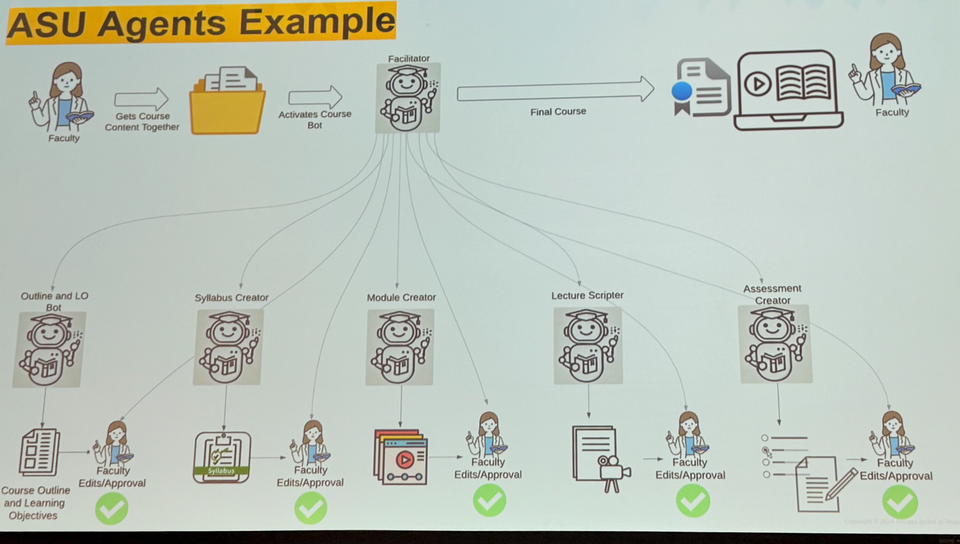

A standout moment at the conference was a keynote by Lev Gonick, CIO at Arizona State University (ASU). ASU is a trailblazer in AI, designing large-scale implementations that balance innovation with faculty oversight.

For example, they’ve developed a multi-agent AI system for course development:

- One agent facilitates the process

- One focuses on learning outcomes

- One builds the syllabus

- One structures course modules

- One generates lecture content

- One designs assessments

At every stage, faculty members provide approval and edits—ensuring AI serves as an assistant, not a replacement.
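
For readers who like to see the shape of an idea in code, here is a minimal sketch of that kind of pipeline. It is an illustration only, not ASU's implementation: the stage names come from the list above, while `CourseDraft`, `stub_agent`, `facilitate`, and `faculty_review` are hypothetical names invented for this example, and the "agents" are stubs that return placeholder text instead of calling a language model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional

# Stage names mirror the list above; everything else is hypothetical.
STAGES: List[str] = [
    "learning outcomes",
    "syllabus",
    "course modules",
    "lecture content",
    "assessments",
]


@dataclass
class CourseDraft:
    topic: str
    # Only faculty-approved text is stored, keyed by stage name.
    approved: Dict[str, str] = field(default_factory=dict)


def stub_agent(stage: str, draft: CourseDraft) -> str:
    """Stand-in for a specialist agent; a real one would call a language model."""
    return f"Draft {stage} for {draft.topic}"


def facilitate(
    topic: str,
    agent: Callable[[str, CourseDraft], str],
    review: Callable[[str, str], Optional[str]],
) -> CourseDraft:
    """Facilitator: run each specialist in order, pausing for faculty review."""
    draft = CourseDraft(topic)
    for stage in STAGES:
        proposal = agent(stage, draft)
        # Faculty return the proposal as-is, an edited version, or None to reject.
        decision = review(stage, proposal)
        if decision is None:
            break  # nothing moves forward without faculty approval
        draft.approved[stage] = decision
    return draft


def faculty_review(stage: str, proposal: str) -> Optional[str]:
    """Example reviewer: edits the syllabus, approves everything else unchanged."""
    if stage == "syllabus":
        return proposal + " (edited by instructor)"
    return proposal


course = facilitate("Intro to Analytics", stub_agent, faculty_review)
for stage, text in course.approved.items():
    print(f"{stage}: {text}")
```

The design choice worth noticing is that the facilitator never stores an agent's output directly. Only what comes back from the review step is kept, which is one simple way to keep the AI in the assistant role.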

I couldn’t help but reflect on my own experience as an instructor. Course prep takes up 80% of my time—time that could arguably be better spent engaging with students. AI-powered tools like this could free up faculty time while maintaining (or even improving) academic rigor.

But AI innovation isn’t limited to large universities. I had great breakfast conversations with faculty from the University of Prince Edward Island (UPEI, 5,816 students), who are using AI in creative ways.

One example: peer feedback enhancement. Students use AI tools to analyze and improve the quality of their feedback—helping them move beyond generic comments like “You’re doing great!” to more constructive and actionable critiques.

Another example: AI-driven exploration of knowledge. Students can:

✅ Drill deeper into a topic

✅ See how concepts connect to broader themes

✅ Get quizzed in an interactive, adaptive way

And yes, they can even set the AI’s “sass level” depending on how much they want to be challenged!

All this said, there are legitimate concerns around copyright, publishing, and reuse rights when training content-generating AI. These concerns are relevant not just for the big AI tools, but also for internal ones.

In the context of generating content for a lecture, some questions to ponder are:

- Where is the content coming from, who inputs it, and what rights do they have to do that relative to the copyright owner?

- Does the institution verify that the material it has trained the model on contains only content whose owners have consented to that use, and that agreements cover the number of uses and lengths of time?

- If faculty upload their own work, do they have control over whether their work is used, stored, or purged after their lecture or lessons?

- Ultimately, LLMs use content created by other humans (and, more recently, by other LLMs that were themselves using content created by humans), and the institution may not have acquired all the appropriate copyrights or AI-usage rights (perpetual storage, use, reuse, citation, and fair dealing/payment for model training). This is still an area where copyright law is failing writers, researchers, and other creators. While this is less likely to be a concern for AIs like TritonGPT, where the input content was created for the purpose of institutional admin services, it is a valid concern for lecture content and assessment design generators.

4️⃣ AI for University Administration is Still in Its Infancy

While AI in teaching and learning is advancing rapidly, AI in university operations is still catching up.

One promising example we saw was using AI to streamline Institutional Review Board (IRB) applications. If you’ve ever gone through an IRB review process, you know how complex it can be (and frustrating, in my experience). AI can help by guiding researchers through the submission process, identifying missing information, and ensuring compliance, saving time for both applicants and reviewers.

At Plaid Analytics, we understand the privacy and data security concerns around AI. Universities need solutions that prioritize security, confidentiality, and ethical use. There are a variety of ways to achieve this, including local models, models deployed securely in the cloud, or the well-known models with appropriate guardrails in place.

A great example of this is TritonGPT at UC San Diego:

“TritonGPT is a powerful tool that helps students navigate UC San Diego's policies, procedures, and campus life. It's like having a personal assistant who knows everything about the university.”

The potential here is huge. Imagine AI helping students find the right services, guiding faculty through administrative processes, and supporting staff with complex workflows—without sacrificing privacy.

5️⃣ AI is Reshaping Traditional Teaching

In disciplines like analytics (where I teach), AI can now complete entire assignments in seconds. But the real challenge is not getting AI to do the work—it’s developing the critical thinking skills needed to question its outputs, recognize biases, and make informed decisions.

As part of the final day, there was a panel on “Indigenous Knowledge and AI: Tensions, opportunities, and responsibilities,” moderated by Ian Cull. One of the speakers, Lindsay DuPré, mentioned that a key difference between Indigenous and Western perspectives on knowledge is how knowledge itself is viewed: in many Indigenous cultures, knowledge is seen as a relation, while Western culture views knowledge as something to be acquired and consumed.

The conversation at AI-Cademy wasn’t about catching students using AI—it was about how AI can be leveraged to enrich learning.

Following the conference, I had a chance to learn a bit more about the First Peoples Principles of Learning, and I believe that considering some of these principles could be an effective way to bring AI more intentionally into the classroom (while acknowledging that AI isn't currently well suited for learning Indigenous language or history).

I repeat these principles below in full:

- Learning ultimately supports the well-being of the self, the family, the community, the land, the spirits, and the ancestors.

- Learning is holistic, reflexive, reflective, experiential, and relational (focused on connectedness, on reciprocal relationships, and a sense of place).

- Learning involves recognizing the consequences of one’s actions.

- Learning involves generational roles and responsibilities.

- Learning recognizes the role of Indigenous knowledge.

- Learning is embedded in memory, history, and story.

- Learning involves patience and time.

- Learning requires exploration of one’s identity.

- Learning involves recognizing that some knowledge is sacred and only shared with permission and/or in certain situations.

(Citation: First Nations Education Steering Committee and BC Ministry of Education (2006-2007): https://www.fnesc.ca/first-peoples-principles-of-learning/)

Here’s how AI can support these ideas in the classroom:

🔹 AI for storytelling and reflection: AI can help students explore knowledge through multiple lenses. Instead of simply producing a report, students can interact with AI to see different perspectives, refine their arguments, and engage in deep reflection—mirroring oral storytelling traditions that emphasize learning through dialogue.

🔹 AI for interactive learning: Students at the University of Prince Edward Island are using AI to evaluate and improve peer feedback—ensuring it goes beyond surface-level praise to offer meaningful, constructive guidance. This aligns with learning as a shared, community-based process, where students learn with and from each other.

🔹 AI for self-paced, experiential learning: AI can adapt to individual learners’ needs, challenging them at their own level. One approach we discussed at the conference is AI-driven exploratory learning:

- Going deeper into a topic to understand the nuances

- Seeing what’s connected to the subject, helping students understand broader contexts

- Being quizzed interactively: students can even adjust the AI’s tone to be more encouraging or more challenging, reinforcing self-directed learning

🔹 AI for ethical and responsible knowledge creation: A big discussion point was ensuring students engage critically with AI rather than blindly accepting its outputs. This resonates with the principle that learning requires exploration of identity, worldviews, and ethics. AI can be a tool for unpacking biases, questioning assumptions, and encouraging respectful engagement with diverse perspectives.

In this way, AI isn’t replacing traditional education—it’s evolving it into something more dynamic, relational, and personalized, while still supporting the foundational principles of reflection, community, and ethical responsibility in learning.

Final Thoughts

AI is changing everything in higher education, but that doesn’t mean every institution needs to dive in headfirst. Thoughtful, strategic adoption is key.

At Plaid Analytics, we’re working with universities to ensure AI is useful, ethical, and impactful—whether in enrolment forecasting, strategic planning, or institutional analytics.

The AI-Cademy Canada Summit reinforced something we strongly believe: AI isn’t just about automation—it’s about unlocking new possibilities for students, faculty, and staff alike.

What are your thoughts? How is AI being used (or not) at your institution? Let’s continue the conversation.

Want to chat about AI in higher education? Request a meeting with Andrew: